AutoLink: Self-supervised Learning of Human Skeletons and Object Outlines by Linking Keypoints

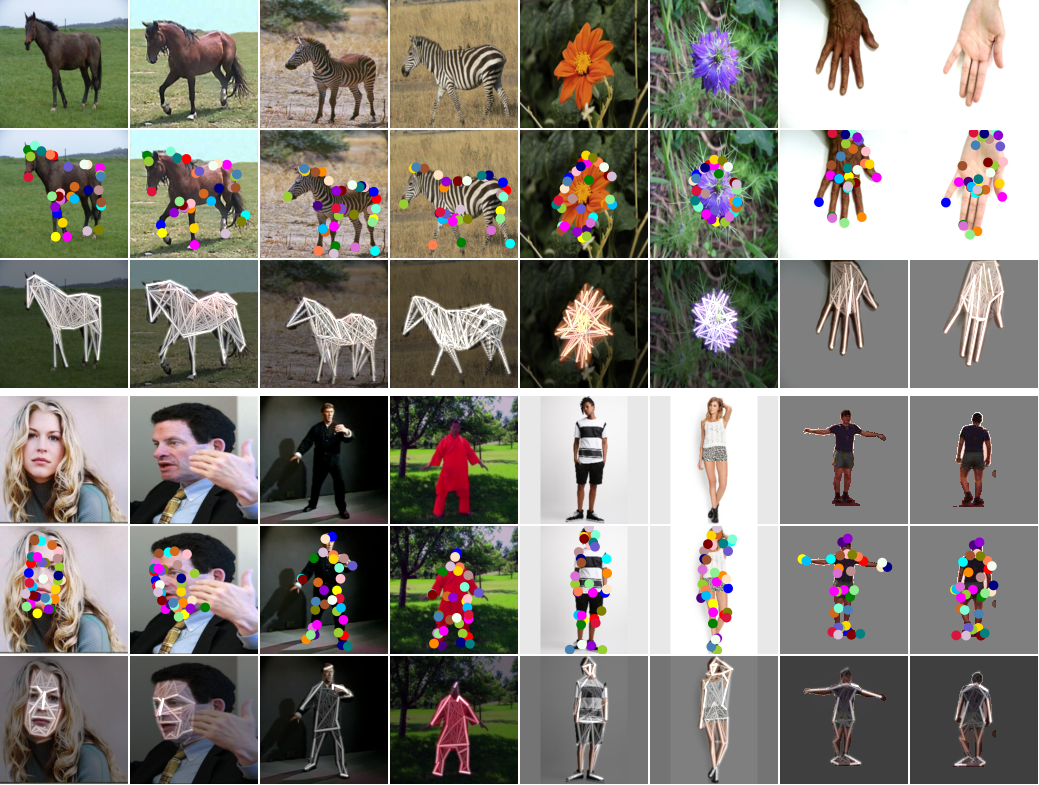

Structured representations such as keypoints are widely used in pose transfer, conditional image generation, animation, and 3D reconstruction. However, their supervised learning requires expensive annotation for each target domain. We propose a self-supervised method that learns to disentangle object structure from appearance with a graph of 2D keypoints linked by straight edges. Both the keypoint locations and their pairwise edge weights are learned, given only a collection of images depicting the same object class. The graph is interpretable; for example, AutoLink recovers the human skeleton topology when applied to images showing people. Our key ingredients are i) an encoder that predicts keypoint locations in an input image, ii) a shared graph as a latent variable that links the same pairs of keypoints in every image, iii) an intermediate edge map that combines the latent graph edge weights and keypoint locations in a soft, differentiable manner, and iv) an inpainting objective on randomly masked images. Although simpler, AutoLink outperforms existing self-supervised methods on the established keypoint and pose estimation benchmarks and paves the way for structure-conditioned generative models on more diverse datasets.
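As a rough illustration of ingredient iv), the sketch below hides random square patches of an image and scores a reconstruction against the unmasked original. The function names, the patch size, the drop ratio, and the mean-squared-error loss are all assumptions chosen for illustration; the text above only states that the objective is inpainting on randomly masked images.

```python
import numpy as np

def random_patch_mask(image, patch=8, drop_ratio=0.8, rng=None):
    """Zero out a random subset of patch x patch blocks (hypothetical helper).

    image: (H, W, C) array with H and W divisible by `patch`.
    Returns the masked image and the binary (H, W) mask (1 = visible).
    """
    rng = np.random.default_rng() if rng is None else rng
    h, w = image.shape[:2]
    if h % patch or w % patch:
        raise ValueError("image height and width must be divisible by patch")
    # One keep/drop decision per block, then upsample to pixel resolution.
    keep = rng.random((h // patch, w // patch)) >= drop_ratio
    mask = np.kron(keep, np.ones((patch, patch))).astype(image.dtype)
    return image * mask[..., None], mask

def reconstruction_loss(reconstruction, image):
    """Mean squared error over all pixels (assumed stand-in for the objective)."""
    return float(np.mean((reconstruction - image) ** 2))
```

In a training loop, a decoder would receive the masked image together with the edge map and be penalized for deviating from the original image, so the missing structure has to be carried by the keypoints and edges.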

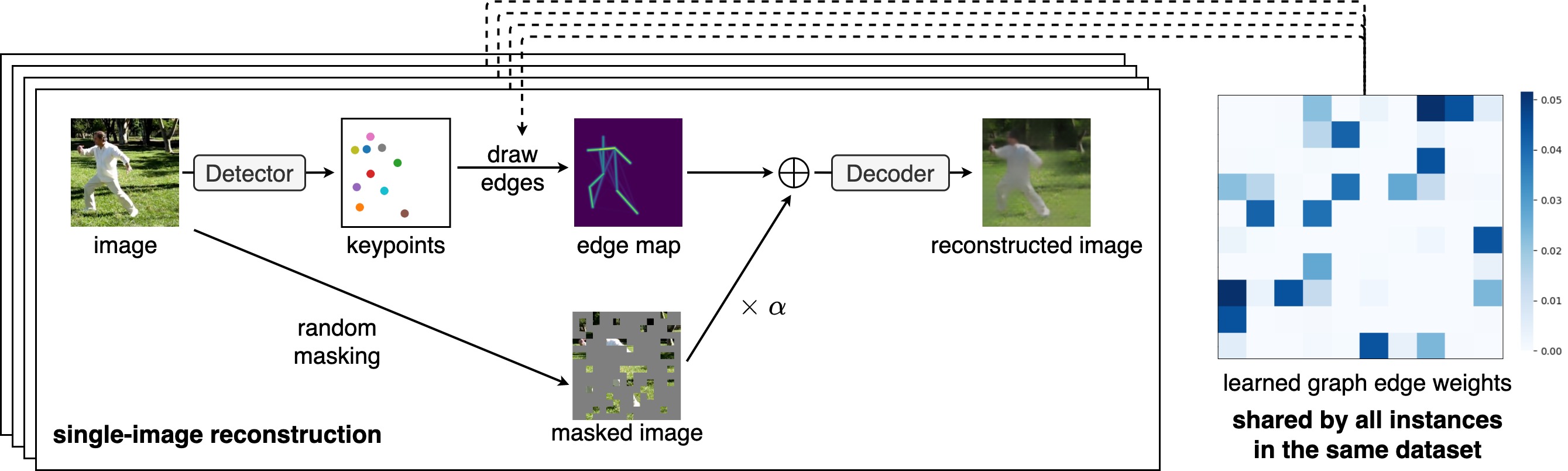

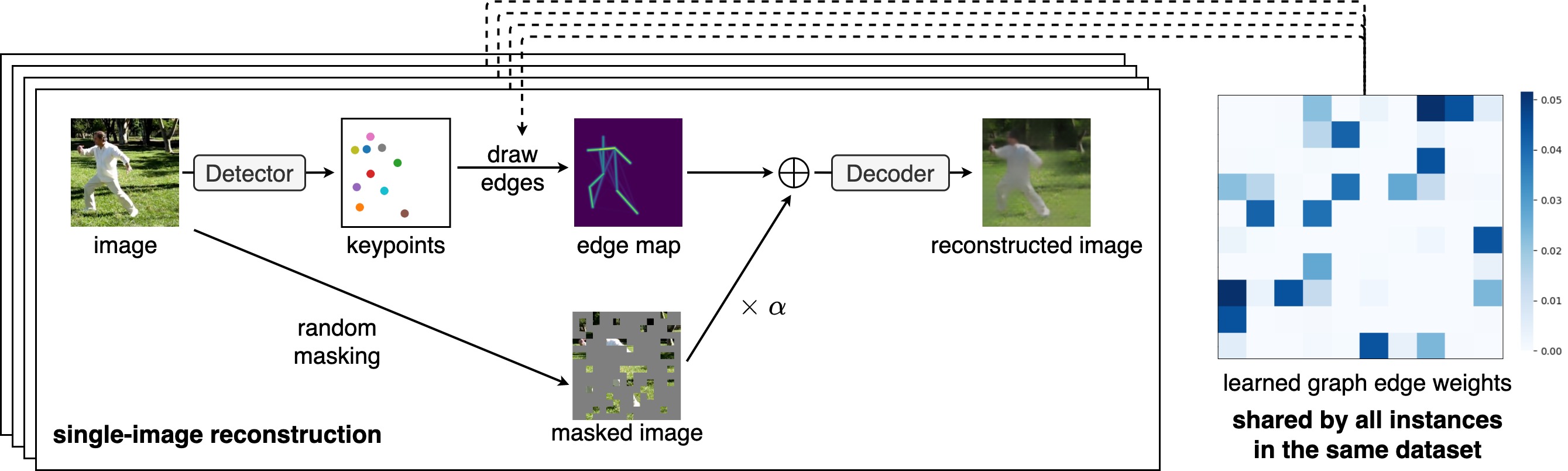

Given an image, we detect keypoints and draw differentiable edges between them according to the learned graph edge weights, which are visualized as a color matrix. The method is self-supervised in that the latent graph and the keypoints are learned by reconstructing the masked input images. Note that the keypoints are image-specific, whereas the graph edge weights are shared across all images.
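The edge drawing described above can be sketched as follows. This is a minimal NumPy illustration under stated assumptions, not the authors' implementation: the Gaussian falloff with distance to each line segment, the width parameter `sigma`, the per-pixel maximum over edges, and the function name `edge_map` are all choices made here for illustration. Every operation is smooth almost everywhere, so the same computation in an autodiff framework would pass gradients to both the keypoints and the edge weights.

```python
import numpy as np

def edge_map(keypoints, edge_weights, size=64, sigma=0.05):
    """Render a soft edge map from keypoints and shared edge weights (sketch).

    keypoints:    (K, 2) array of (x, y) coordinates in [-1, 1].
    edge_weights: (K, K) array of non-negative weights, shared across images.
    Returns a (size, size) array; row index is y, column index is x.
    """
    lin = np.linspace(-1.0, 1.0, size)
    xs, ys = np.meshgrid(lin, lin)
    pixels = np.stack([xs, ys], axis=-1)            # (size, size, 2)
    out = np.zeros((size, size))
    num_kp = keypoints.shape[0]
    for i in range(num_kp):
        for j in range(i + 1, num_kp):
            a, b = keypoints[i], keypoints[j]
            ab = b - a
            # Project each pixel onto the segment a-b, clamped to its ends.
            t = ((pixels - a) @ ab) / (ab @ ab + 1e-8)
            t = np.clip(t, 0.0, 1.0)
            closest = a + t[..., None] * ab
            dist_sq = np.sum((pixels - closest) ** 2, axis=-1)
            # Gaussian falloff (assumed) scaled by the learned edge weight.
            heat = edge_weights[i, j] * np.exp(-dist_sq / sigma ** 2)
            out = np.maximum(out, heat)
    return out
```

For example, with three keypoints and a single non-zero weight between the first two, only the segment joining those two keypoints appears in the map; the third keypoint contributes nothing until its weights grow during training.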

The learned graph representation can be used to train a pose transfer network on videos. Note that the graph is learned only on a collection of single images, and so is the translation model. Nevertheless, the transfer is stable even when the pose change is large (first row), and subtle details such as mouth motion (third row) and eye blinking (fourth row) are also captured.

The learned graph representation can be used to train a conditional GAN. In this experiment, we trained a single detector and a single GAN on AFHQ, with all of its animal classes trained jointly. This demonstrates robustness to shape variations and the capability to learn a shared animal head model.

@inproceedings{he2022autolink,
  title={AutoLink: Self-supervised Learning of Human Skeletons and Object Outlines by Linking Keypoints},
  author={He, Xingzhe and Wandt, Bastian and Rhodin, Helge},
  booktitle={Advances in Neural Information Processing Systems},
  year={2022}
}